Most AI UX Is Lazy

And why we love to hate chatbots

Every AI feature shipped in the last two years looks the same.

A chat box, a loading spinner, and a disclaimer at the bottom. The interface is a text field that says “Ask me anything.”

The problem is not the AI. The problem is that product builders don’t ask the right question. They ask “How do we add AI to our product?” They should be asking “What user problem can we solve now that was previously unsolvable?”

When you skip that question, you get the default: a chatbot. When you answer it, you get far better results. My most successful launches using AI were not chat interfaces. Some of them were text fields that turn into checkboxes. Some were descriptions and summaries. Others were just high-performing classifiers. More on that later.

Lazy AI UX comes from lazy AI thinking. Teams start with the interface instead of the capability.

The Four Lazy Defaults

The Chatbot. Everyone’s first instinct. “Chat with your data.” Notion, Salesforce, HubSpot, Shopify. They all shipped it as their flagship AI feature. Chat shifts cognitive load to the user. “Figure out what to ask” is work, and you have handed it to the user instead of doing it yourself. Your product should already know what the user needs. Chat is one AI primitive forced onto every problem regardless of fit. When your travel app, your CRM, your project management tool, and your email client all have the same chat interface, something has gone wrong with the thinking.

The Magic Wand Button. “AI Generate” dropped next to every text field. No context about what it will generate. No way to guide it. No refinement loop. Click, get a blob of text, accept or regenerate. This is another single primitive (generation) with zero UX thought applied to it. The lottery machine approach to product design. Pull the lever, see what comes out, pull it again if you don’t like it.

The Loading Spinner of Trust Destruction. Eight-second wait. No progress indication. Then streaming text appears character by character. Impressive in a demo, anxiety-inducing in production. Users cannot verify something that is still being written. They sit there watching words appear, not knowing whether to trust what they are reading or wait for the next sentence to contradict it. The latency is not a performance problem. It is a trust problem.

The Disclaimer. “AI-generated content may contain errors.” Plastered at the bottom of every output as a substitute for designing verification into the experience. If your UX strategy for handling AI errors is a legal footnote, you did not design UX. You designed liability coverage.

Why Teams Default to Lazy

The problem is structural. These are not bad designers making bad decisions. These are reasonable people responding to bad incentives.

Demo-driven development. Chat demos beautifully in board meetings. Executive types a question. AI answers. Room applauds. The demo becomes the product strategy. But what demos well and what gets adopted are different things. A chatbot that impresses a VP in a conference room can still get low adoption from actual users who have faster ways to get the same information.

Model-out thinking instead of user-back thinking. “We have GPT-4, what can we do with it?” instead of “Users struggle with X, can AI help?” The capability drives the UX instead of the problem driving the capability. This is the root of lazy AI UX. Starting with the tool instead of the user.

The API makes chat easy and everything else hard. The default API interface is messages in, text out. Chat is the path of least engineering resistance. Structured output, proactive surfacing, inline suggestions: all harder to build. So teams ship the easy thing and call it v1.

No established patterns, so teams copy each other. When Notion shipped a chatbot, everyone shipped a chatbot. The industry is cargo-culting UX from whoever shipped first, regardless of whether it worked. Copying someone else’s lazy default does not make it less lazy.

The Right Question: What Can You Do Now That You Could Not Before?

AI gives you a set of primitives. Things that were previously impossible or prohibitively expensive to do at scale. So the question to ask is this: you have a capability now that you did not have before. Which previously unsolvable problems can you now solve?

Answering this question requires a deep understanding of the capabilities the tech unlocks. I’m going to lay out my mental model here. Yours may be slightly different, but as long as the broad-strokes capabilities converge, you have a tool set you can build a foundation on.

The LLM Primitives

1. Intent Detection

What it unlocks: understanding what users mean, not just what they type. Interpreting unstructured input and mapping it to structured actions at scale. This was previously impossible without building thousands of hand-coded rules that broke every time someone phrased something differently.

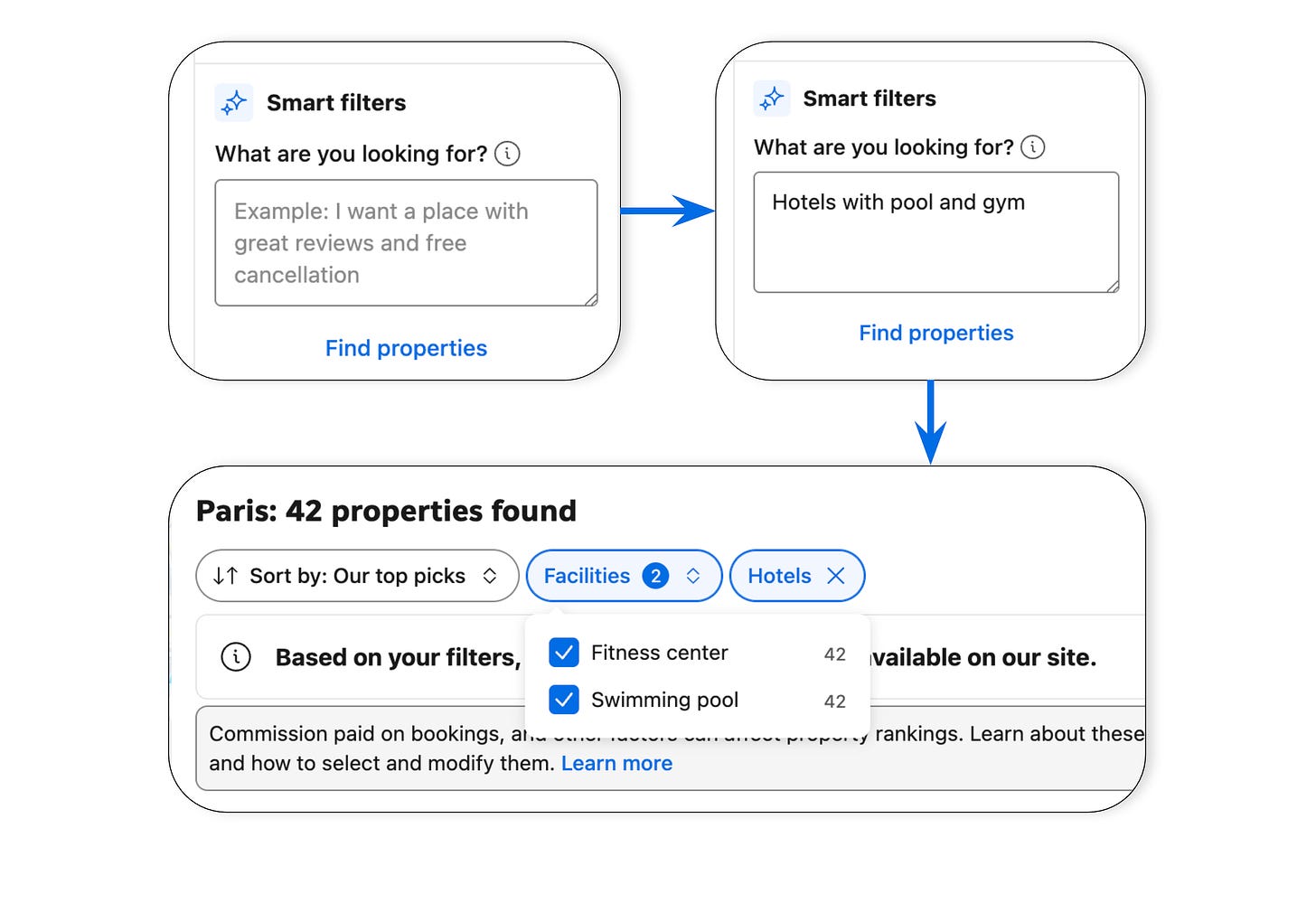

Example: Booking.com Smart Filters. User types “hotels with pool and gym.” The AI maps that to existing structured filters: Swimming Pool and Fitness Center checkboxes, pre-selected. Property type set to Hotels. 42 results. The AI detected intent and translated it to structured action. No chat. No generated text. Full story below.

2. Generation

What it unlocks: creating content that did not exist, at zero marginal cost. First drafts, suggestions, completions, variations. Previously, every piece of content required a human to write it from scratch.

Example: inline drafts with refinement loops. GitHub Copilot suggests a line of code. Tab to accept. Keep typing to reject. The suggestion disappears with zero friction. Cursor takes it further: it shows diffs you can accept or reject line by line. The output is not text to read. It is a decision to make. The user stays in control throughout.

3. Summarisation

What it unlocks: compressing large volumes of information so humans do not have to process all of it. Distilling hundreds of pages, thousands of reviews, or hours of conversation into the parts that matter.

Example: contextual highlights and progressive disclosure on a set of reviews or user-generated content. Surface the three things that matter. Let the user drill into detail on the ones they care about. The AI does not just compress information. It prioritises it, based on what the user is trying to do right now. A summary of a 50-page legal contract should look different for the CFO than for the head of legal.

4. Classification

What it unlocks: sorting, routing, labelling, and triaging automatically. Categorising unstructured content accurately without hand-built rules for every scenario.

Example: Linear’s Triage Intelligence. When a new issue comes in, the AI suggests a team, assignee, labels, and related issues. The suggestions are visible. Hover over them to see the reasoning. Accept with one click or override. The interesting thing about classification is that the lazy default is not a bad interface. It is no interface at all. Teams use classification behind the scenes but never surface it as something the user can see, trust, and refine. That is a missed opportunity. Making classification visible turns it into a feature users value.
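To make "visible, trustworthy, refinable" concrete, here is a minimal sketch of the data a suggestion would need to carry. It is not Linear's implementation. The `Suggestion` class, its field names, and the 0.6 confidence cut-off are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Suggestion:
    """One visible, explainable classification for a single issue attribute (hypothetical shape)."""
    attribute: str                     # e.g. "team", "assignee", "label"
    value: str                         # what the classifier proposes
    reason: str                        # shown on hover so the user can judge it
    confidence: float                  # 0.0 to 1.0, as reported by the classifier
    final_value: Optional[str] = None  # set once the user decides

    def accept(self) -> None:
        self.final_value = self.value

    def override(self, value: str) -> None:
        self.final_value = value


def visible(suggestions: List[Suggestion], min_confidence: float = 0.6) -> List[Suggestion]:
    """Only surface suggestions the classifier is reasonably sure about (assumed threshold)."""
    return [s for s in suggestions if s.confidence >= min_confidence]


suggestions = [
    Suggestion("team", "Payments", "Mentions a failed card charge", 0.91),
    Suggestion("label", "bug", "Describes unexpected behaviour", 0.74),
    Suggestion("assignee", "dana", "Closed two similar issues", 0.41),
]
shown = visible(suggestions)
shown[0].accept()             # one click
shown[1].override("billing")  # the user knows better
```

The point of the `reason` field is that the classification stops being a silent backend decision. The low-confidence assignee guess is simply not shown, which costs the user nothing.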

5. Extraction

What it unlocks: pulling structured data from unstructured input. Reliably extracting specific fields from messy documents, images, or conversations at scale.

Example: auto-populated fields from uploaded documents. Upload an invoice, get line items extracted and pre-filled. Upload a resume, get candidate fields populated. The user reviews and corrects. They are editors, not transcribers. The shift from “type everything” to “verify and fix” is enormous. It turns a ten-minute task into a thirty-second review.
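The "verify and fix" flow depends on pointing the reviewer at the fields most likely to be wrong. A rough sketch of that ordering, assuming the extractor returns a per-field confidence score; `ExtractedField`, `needs_review`, and the 0.85 threshold are hypothetical names and values, not taken from any particular product.

```python
from dataclasses import dataclass
from typing import List

REVIEW_THRESHOLD = 0.85  # assumed cut-off; in practice this would be tuned per field


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # 0.0 to 1.0, as reported by the extractor


def needs_review(fields: List[ExtractedField]) -> List[ExtractedField]:
    """Fields the user should look at first: empty or low-confidence, least sure first."""
    flagged = [f for f in fields if not f.value or f.confidence < REVIEW_THRESHOLD]
    return sorted(flagged, key=lambda f: f.confidence)


invoice = [
    ExtractedField("vendor", "Acme Ltd", 0.98),
    ExtractedField("total", "1,240.00", 0.72),
    ExtractedField("due_date", "", 0.30),
    ExtractedField("invoice_number", "INV-0042", 0.95),
]
for f in needs_review(invoice):
    print(f"check {f.name}: {f.value!r}")
```

Everything else is pre-filled and left alone. The user reviews two fields instead of typing four.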

6. Memory

What it unlocks: accumulating context over time and using it to make every other primitive smarter. A system with memory knows that this user always filters for pet-friendly hotels. It knows that this team routes billing bugs to the payments squad. It knows that this reader skips the methodology section.

Memory is the enabling layer. Intent detection with memory gets better at understanding what this specific user means. Classification with memory routes more accurately based on how similar items were handled before. Summarisation with memory knows what this person cares about and prioritises accordingly.

Example: a support agent that already knows your order history, your past issues, your account tier. It does not ask you to explain your problem from scratch. It picks up where the last interaction left off. Every interaction makes the next one faster.

Without memory, every AI interaction starts from zero. With it, the product compounds. This is also why memory is the hardest primitive to copy. Your competitor can use the same model. They cannot replicate what your product has learned about your users.
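As a sketch of how memory can feed another primitive, the snippet below counts which filters a user keeps choosing and offers the habitual ones as suggestions alongside whatever intent detection found. `FilterMemory`, `with_memory`, and the "three uses" rule are illustrative assumptions, and a real system would persist this rather than hold it in a dictionary.

```python
from collections import Counter, defaultdict
from typing import Dict, List


class FilterMemory:
    """Remembers how often each user has applied each filter (in-memory, hypothetical)."""

    def __init__(self, min_uses: int = 3):
        self.min_uses = min_uses
        self._counts: Dict[str, Counter] = defaultdict(Counter)

    def record(self, user_id: str, filters: List[str]) -> None:
        # set() so a filter counts once per search, even if repeated
        self._counts[user_id].update(set(filters))

    def habitual(self, user_id: str) -> List[str]:
        counts = self._counts[user_id]
        return sorted(f for f, n in counts.items() if n >= self.min_uses)


def with_memory(detected: List[str], memory: FilterMemory, user_id: str) -> dict:
    """Keep detected filters checked; offer habitual ones as suggestions, not pre-checked."""
    suggested = [f for f in memory.habitual(user_id) if f not in detected]
    return {"checked": list(detected), "suggested": suggested}


memory = FilterMemory(min_uses=3)
for search in (["pet_friendly", "swimming_pool"], ["pet_friendly"], ["pet_friendly", "fitness_center"]):
    memory.record("user-1", search)

state = with_memory(["swimming_pool"], memory, "user-1")
```

Remembered filters are suggested rather than silently applied, so memory speeds the user up without taking a decision away from them.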

These six primitives naturally combine. Intent detection figures out what the user wants. Memory recalls what it already knows about them. Classification routes the request. Extraction pulls the relevant data. Summarisation compresses it. Generation produces the response. Orchestrate all six well and you get a conversation. That is why chat makes for impressive demos. It exercises every primitive at once. It is also why chat is the laziest default. You are using all six primitives to do a job that one or two of them could handle better.

The Agent Primitives

These are emerging. The UX patterns are still being invented.

1. Planning and Decomposition

What it unlocks: the user states an outcome. The AI figures out how to get there. Breaking an ambiguous goal into concrete, executable steps.

The lazy version: an agent that narrates its chain-of-thought in a chat window. “First, I will search for... Now I am analysing... Let me think about this...” The user watches the AI think out loud, step by step, for ninety seconds. This is performative reasoning. It looks impressive. It wastes the user’s time.

The good version: a proposed plan the user can review, edit, and approve before execution starts. Show the steps. Let the user reorder, remove, or add to them. Then execute. The user wants to approve the plan, not watch the thinking.
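One way to picture the "review, edit, approve, then execute" pattern is a plan object that refuses to run until it has been approved, and that loses its approval whenever it is edited. This is a sketch under those assumptions; `Plan` and `execute` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Plan:
    goal: str
    steps: List[str] = field(default_factory=list)
    approved: bool = False

    def add(self, step: str, index: Optional[int] = None) -> None:
        self.steps.insert(len(self.steps) if index is None else index, step)
        self.approved = False  # any edit invalidates an earlier approval

    def remove(self, index: int) -> None:
        del self.steps[index]
        self.approved = False

    def move(self, old: int, new: int) -> None:
        self.steps.insert(new, self.steps.pop(old))
        self.approved = False

    def approve(self) -> None:
        self.approved = True


def execute(plan: Plan, run_step: Callable[[str], str]) -> List[str]:
    """Run every step in order, but only for a plan the user has approved."""
    if not plan.approved:
        raise PermissionError("plan must be approved before execution")
    return [run_step(step) for step in plan.steps]


plan = Plan("Plan a team offsite", ["book venue", "send invites", "order catering"])
plan.move(2, 0)   # the user wants catering sorted first
plan.remove(2)    # invites will go out by hand
plan.approve()
results = execute(plan, lambda step: f"done: {step}")
```

The user's interaction is with the list of steps, not with a transcript of the model thinking.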

2. Autonomous Execution

What it unlocks: completing multi-step tasks without human involvement at every step. The AI does the work, not just the thinking.

The lazy version: a chat-based agent that asks permission at every single step. “Should I do this? Okay. Should I do this next? Okay.” This turns autonomy into a slower version of doing it yourself. The whole point of an agent is that it handles the steps. If you have to approve each one, you are just operating a very slow remote control.

The good version: background execution with confirmation checkpoints at meaningful decision points. The agent does the work. You approve the result. Not every intermediate step. Just the ones that matter.
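The difference between the two versions comes down to which steps are allowed to interrupt the user. A minimal sketch, assuming each step is marked up front as needing confirmation or not; `Step`, `run_agent`, and the flight example are made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str
    run: Callable[[], str]
    needs_confirmation: bool = False  # only meaningful decision points set this


def run_agent(steps: List[Step], confirm: Callable[[Step], bool]) -> List[str]:
    """Run steps in order, asking the user only at flagged checkpoints."""
    log = []
    for step in steps:
        if step.needs_confirmation and not confirm(step):
            log.append(f"stopped before: {step.name}")
            break
        log.append(step.run())
    return log


asked = []


def confirm(step: Step) -> bool:
    asked.append(step.name)  # stand-in for a real confirmation prompt
    return True


steps = [
    Step("search flights", lambda: "found 12 flights"),
    Step("pick cheapest", lambda: "picked the 09:40 departure"),
    Step("pay for ticket", lambda: "ticket booked", needs_confirmation=True),
]
log = run_agent(steps, confirm)
```

Three steps run and the user is asked once, at the step that spends money.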

3. Environment Interaction

What it unlocks: the AI does not just suggest. It acts. It books, sends, files, updates. It reaches into external systems and changes things.

The lazy version: “I have drafted an email, would you like me to send it?” Repeated for every individual action. Twelve confirmation dialogs for twelve actions. The friction of asking permission one action at a time destroys any efficiency the agent was supposed to create.

The good version: batch actions with review, clear undo capability, and sandbox-then-commit patterns. This is where trust design matters most. The gap between “AI that suggests” and “AI that acts” requires UX that gives users confidence without demanding constant supervision. Show the user what will happen. Let them approve the batch. Make it reversible.
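A sandbox-then-commit flow can be sketched as a batch that stages actions, shows a preview, applies them together, and can roll them back. The `Action` and `Batch` names are hypothetical, and the sketch assumes every action can supply its own undo, which is not always true of real external systems.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    description: str
    do: Callable[[], None]
    undo: Callable[[], None]


class Batch:
    """Stage actions, let the user review them, commit once, undo if needed."""

    def __init__(self) -> None:
        self.staged: List[Action] = []
        self.committed: List[Action] = []

    def stage(self, action: Action) -> None:
        self.staged.append(action)

    def preview(self) -> List[str]:
        return [a.description for a in self.staged]

    def commit(self) -> None:
        while self.staged:
            self.staged[0].do()
            self.committed.append(self.staged.pop(0))

    def undo_all(self) -> None:
        while self.committed:
            self.committed.pop().undo()  # reverse order of application


archived = set()
batch = Batch()
for msg_id in ("m1", "m2", "m3"):
    batch.stage(Action(
        description=f"Archive {msg_id}",
        do=lambda m=msg_id: archived.add(m),
        undo=lambda m=msg_id: archived.discard(m),
    ))

summary = batch.preview()  # one review screen instead of three dialogs
batch.commit()
```

Nothing touches the outside world until `commit()`, and `undo_all()` is what makes a single approval feel safe.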

4. Monitoring and Reaction

What it unlocks: the AI pays attention when you are not. Watching for conditions and acting proactively when they are met.

The lazy version: notification spam. Alerting on everything because the system cannot distinguish signal from noise. Fifteen notifications a day, fourteen of which are irrelevant. The user learns to ignore all of them.

The good version: smart triggers with context and action. “Your flight price dropped $200. I held the lower rate for 24 hours.” The notification is not just information. It is a completed action the user can confirm or undo. Event-driven, not attention-demanding. The AI earns trust by acting on the user’s behalf with clear, reversible actions.
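The flight example can be read as a trigger with two properties: it stays silent below a threshold, and when it fires it carries an action the user can confirm or undo. A rough sketch; `PriceAlert`, `check_price`, and the 50-dollar minimum drop are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class PriceAlert:
    message: str
    actions: Tuple[str, ...]  # what the user can do with the completed action


def check_price(booked: float, current: float, min_drop: float = 50.0) -> Optional[PriceAlert]:
    """Return an alert only when the drop is large enough to be worth interrupting for."""
    drop = booked - current
    if drop < min_drop:
        return None  # below the threshold: say nothing
    return PriceAlert(
        message=f"Your flight price dropped ${drop:.0f}. I held the lower rate for 24 hours.",
        actions=("confirm", "undo"),
    )


quiet = check_price(booked=600.0, current=590.0)
alert = check_price(booked=600.0, current=400.0)
```

Most checks return `None`. That silence is what keeps the one notification that does fire from being ignored.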

The agent primitives will define the next generation of AI UX decisions. The lazy defaults are already forming. Mostly chat windows narrating agent behaviour. The teams that design better patterns now will own the category.

Smart Filters: Intent Detection Done Right

The most successful AI feature I shipped at Booking.com has no chat, no generated text, and no disclaimer.

The experience: you are searching for hotels in Paris. On the search page, there is a Smart Filters text field. You type “hotels with pool and gym.” You tap “Find properties.” What comes back is not a paragraph of AI-generated recommendations. It is the existing filter UI. Checkboxes for Swimming Pool and Fitness Center, pre-selected. Property type set to Hotels. 42 results.

That is it. The AI understood what you meant and translated it into the structured actions the product already supports. Here is why it works:

The AI does not replace the interface. It accelerates it. The filters already existed. Users already knew how to use them. The AI just fills them in faster than the user could manually. The user ends up in the exact same place, with the same controls, the same mental model. Just faster.

The output is verifiable at a glance. Did it understand “pool”? Swimming Pool is checked. “Gym”? Fitness Center is checked. Verification takes one second. Compare that to a chatbot response: “Based on your preferences, I recommend these hotels...” followed by a generated list you have no way to audit for completeness or accuracy.

It is editable without starting over. Wrong filter? Uncheck it. Missing one? Add it. The user stays in control without having to rephrase a question and hope the AI gets it right the second time. Compare this to the regeneration loop of a chatbot, where your only option when the output is wrong is to try again with different words.

Failure is cheap. If the AI misinterprets something, the user adjusts a checkbox. No dead end. No trust collapse. No disclaimer needed, because the output is not generated content. It is a filter selection the user can see and fix instantly.

It uses the right primitive. This is intent detection. The AI’s job is to understand what the user means and translate it to structured action. A chatbot would have used dialog management and generation. Those are the wrong primitives for this problem. The user does not want a conversation about hotels. They want 42 results that match their criteria.

The question that led to this feature was not “how do we add AI to search?” It was “users know what they want, but finding the right combination of filters from a list of 200+ takes too long. Can we solve that?” The primitive (intent detection) matched the problem (translating fuzzy preferences into structured filters). The UX followed from there.
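For readers who want to see the shape of this, here is a minimal sketch of the last step: taking a model's structured reply and turning it into filter state, keeping only filters the product actually has. This is not Booking.com's implementation. The catalog, the JSON shape, and the `apply_intent` function are all assumptions, and the model call itself is left out.

```python
import json

# Hypothetical filter catalog; a real product would have hundreds of entries.
FILTER_CATALOG = {
    "swimming_pool": "Swimming Pool",
    "fitness_center": "Fitness Center",
    "pet_friendly": "Pet Friendly",
}
PROPERTY_TYPES = {"hotels", "apartments", "hostels"}


def apply_intent(model_json: str) -> dict:
    """Turn a model's JSON reply into filter state, dropping anything the product does not support."""
    try:
        parsed = json.loads(model_json)
    except json.JSONDecodeError:
        parsed = {}
    if not isinstance(parsed, dict):
        parsed = {}

    requested = parsed.get("filters", [])
    if not isinstance(requested, list):
        requested = []
    checked = [f for f in requested if isinstance(f, str) and f in FILTER_CATALOG]
    ignored = [f for f in requested if f not in checked]

    property_type = parsed.get("property_type")
    if not (isinstance(property_type, str) and property_type in PROPERTY_TYPES):
        property_type = None

    return {"checked": checked, "property_type": property_type, "ignored": ignored}


# Assumed model reply for "hotels with pool and gym", plus one filter that does not exist.
reply = '{"filters": ["swimming_pool", "fitness_center", "rooftop_helipad"], "property_type": "hotels"}'
state = apply_intent(reply)
```

If the model returns garbage, the worst case is an empty filter panel, which is exactly where the user would have started anyway. That is what "failure is cheap" looks like in code.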

The Real Problem

The lazy UX problem is a lazy thinking problem. Teams jump from “we have AI” to “ship a chatbot” without asking what capability they have and what user problem it solves.

Ten primitives across two tiers: six LLM primitives and four agent primitives. Each one unlocks UX patterns that were previously impossible. Each one has a lazy default that teams reach for because it is easy, not because it is right.

The builders who win will match the primitive to the problem and design the interface that primitive deserves.

A text field that turns into checkboxes. Intent detection with the right UX. No chat. No generated paragraphs. No disclaimer. Just the existing interface, faster.

Start with the user problem. Identify the primitive. Design the UX it deserves.
